Production Infrastructure

Technology

The Ganek Immersive Studio operates a fully in-house production, post-production, and real-time pipeline — from stereoscopic capture through to delivery on Apple Vision Pro and Meta Quest.

01

Capture

Stereoscopic production at the highest level.

Primary Camera

Blackmagic URSA Cine Immersive

Our primary production camera for Apple Vision Pro (AVP) content. Shoots native Apple Immersive Video in stereoscopic 180°, the same format used in Apple’s own immersive productions, and gives us the highest-quality stereo 180 capture available for AVP delivery.

Secondary Camera

Canon R5C · Dual Fisheye Stereo

Paired with a dual fisheye stereo lens for flexible 180° and 360° capture. Used across a wide range of student productions and R&D projects where a lighter, more agile setup is required.

02

3D Pipeline

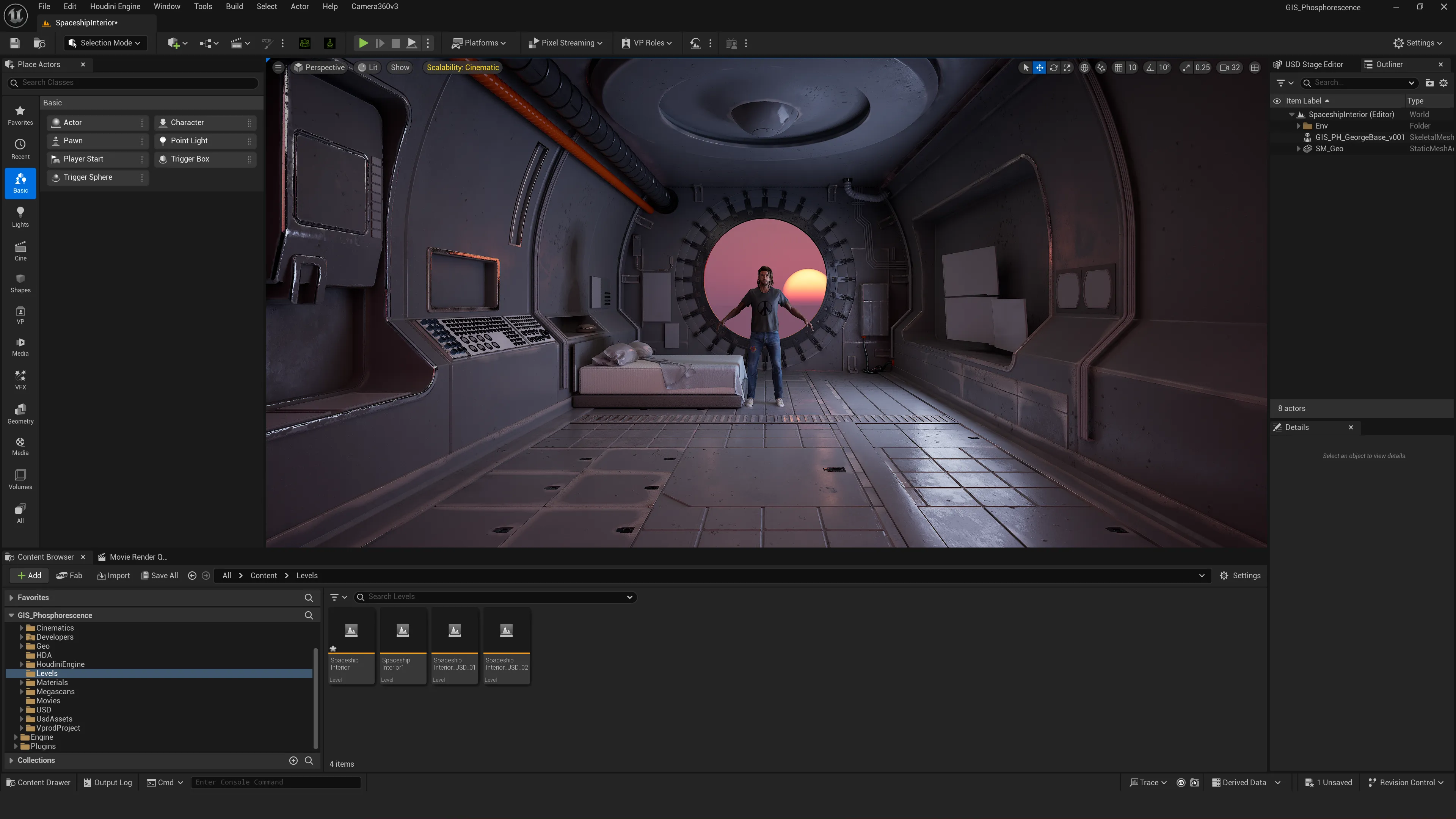

Houdini, USD, and Unreal at the core.

Our 3D content pipeline is built around Houdini and USD for procedural world-building and asset creation, feeding into Unreal Engine for real-time rendering and delivery. This gives us a highly flexible, non-destructive pipeline capable of pushing content to any XR platform.

Procedural 3D

Houdini + USD

Procedural modeling, FX, and world-building. USD as the interchange format across the entire pipeline for non-destructive, platform-agnostic asset delivery.

Real-Time Rendering

Unreal Engine + OWL

Unreal Engine for high-resolution 360 and stereo 180 rendering, using the OWL Live-Streaming Toolkit for real-time equirectangular, cubemap, stereo 180, and 360 camera output directly to a render target.
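For readers new to the formats named above, here is a minimal sketch of how a view direction lands in a stereo 180 equirectangular frame. It is not the OWL toolkit's API or the studio's code. The function names, the axis convention (+z forward, +x right, +y up), and the side-by-side layout with the left eye first are all assumptions made for illustration.

```python
import math


def direction_to_equirect180(x, y, z, width, height):
    """Map a view direction to pixel coordinates in one eye's 180-degree
    equirectangular image.

    Assumed convention: +z is forward, +x is right, +y is up.
    Returns None for directions behind the camera.
    """
    length = math.sqrt(x * x + y * y + z * z)
    if length == 0.0:
        raise ValueError("direction must be non-zero")
    x, y, z = x / length, y / length, z / length
    if z < 0.0:
        return None  # outside the front hemisphere
    lon = math.atan2(x, z)                    # -pi/2 (left) .. +pi/2 (right)
    lat = math.asin(max(-1.0, min(1.0, y)))   # -pi/2 (down) .. +pi/2 (up)
    u = (lon / math.pi + 0.5) * (width - 1)   # column within the eye image
    v = (0.5 - lat / math.pi) * (height - 1)  # row, 0 at the top
    return u, v


def to_side_by_side(eye, u, eye_width):
    """Offset a per-eye column into a side-by-side frame (left eye first)."""
    if eye not in ("left", "right"):
        raise ValueError("eye must be 'left' or 'right'")
    return u + (eye_width if eye == "right" else 0)


# Hypothetical usage: straight ahead, right eye, 4096 x 4096 pixels per eye.
u, v = direction_to_equirect180(0.0, 0.0, 1.0, 4096, 4096)
print(to_side_by_side("right", u, 4096), v)
```

Longitude and latitude map linearly to columns and rows, which is why equirectangular frames look stretched toward the top and bottom edges.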

Interactive / Generative

TouchDesigner + Unity

TouchDesigner for generative visuals, interactive installations, and real-time multimedia pipelines. Unity for cross-platform XR development and interactive project delivery.

03

Post Production

In-house compositing, stitching, edit, and color.

Every project goes through a fully in-house post-production workflow. From stereoscopic stitching to final color grade, we keep complete control of the image at every stage between shoot and delivery.

VR Stitching

Mistika VR

Industry-standard stereoscopic stitching and 360 post-production. Used for optical-flow stitching, stereo alignment, and preparing immersive footage for compositing and delivery.

Compositing

Nuke

Node-based compositing for VFX-heavy productions. Used for integration of CG elements into live-action immersive footage, keying, and final image finishing across the stereo 180 and 360 pipeline.

Edit + Color

DaVinci Resolve

Primary editing and color grading platform across all productions. Used for both the narrative edit and the final color grade, with support for immersive video formats and high dynamic range delivery.

04

Volumetric + AI

Exploring the frontier of volumetric and AI-driven content.

A core strand of the studio’s R&D program explores emerging volumetric and AI workflows: Gaussian splats, point clouds, and photogrammetry for spatial capture, combined with AI image and animation tools for rapid development and visual exploration.

Volumetric Capture

Splats · Point Clouds · Photogrammetry

Gaussian splatting for real-time radiance-field rendering, LiDAR and structured-light point cloud capture, and photogrammetry for high-fidelity mesh reconstruction, all integrated into the Unreal and Houdini pipeline for XR delivery.

AI Tools

Image + Animation Generation

AI image generation tools including Stable Diffusion, ControlNet, Midjourney, and DALL-E are actively used in development and pre-production. AI animation research is an ongoing strand of the studio’s R&D program.

05

Output Platforms

Delivering to the leading XR platforms.

Productions are delivered to the two leading immersive headset platforms, with content mastered and optimized for each platform’s specific technical requirements.

Primary Platform

Apple Vision Pro

Our primary delivery platform. Productions shot on the Blackmagic URSA Cine Immersive deliver native Apple Immersive Video, the highest-quality immersive video format available for the headset. The studio produces content specifically optimized for Apple Vision Pro’s display and rendering capabilities.

Secondary Platform

Meta Quest

Standalone VR delivery via Meta Quest. Used across student projects and interactive productions where broader accessibility and standalone deployment are priorities.

Visual

Storytelling

Technology in service of story.

Every tool in the pipeline exists to serve one goal — the most compelling immersive storytelling possible. Our technology stack is chosen and refined based on what produces the best creative results for students and staff working across every format we support.

See It In Action

Explore the projects built with this pipeline.

Every tool on this page has been used in production. See how students and staff have applied the technology across VR, AR, XR, and immersive formats.